A CFC@LDN Fan

Hi, my name is CHENG YE (程烨, 程ヨウ). I am currently a first-year master's student at Kyoto University, where I conduct research in the Data Engineering and Platform Research Group, advised by Prof. Kazuyuki Shudo. I received my B.Eng. degree from Kansai University, where I was advised by Assoc. Prof. Adachi Naotoshi.

My research interests lie broadly in federated learning and blockchain. Outside of research, I enjoy climbing, football, and working out.

Education


Kyoto University, Graduate School of Informatics


Master's Student, April 2026 - March 2028

Kansai University, B.Eng., Department of Civil, Environmental and Applied Systems Engineering, April 2021 - March 2026


Language


Chinese: native


Japanese: conversational


English: reading and listening


News

Selected Publications

GOFA: Gradient-Oriented Backdoor Attack in Vertical Federated Learning

Ye CHENG, Adachi Naotoshi

International Conference on Electrical, Computer and Energy Technologies (ICECET 2026)

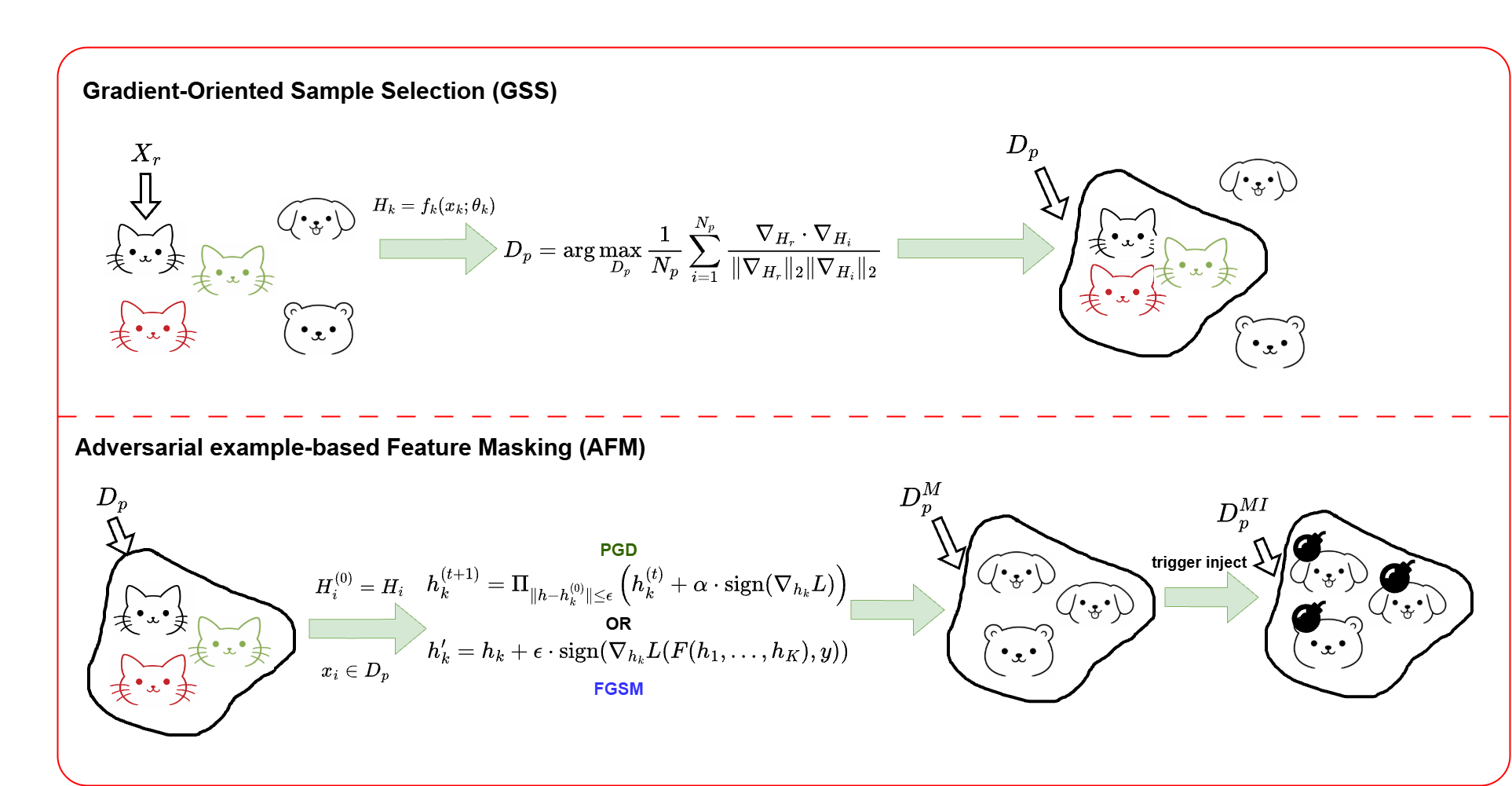

Vertical federated learning (VFL) enables multiple organizations with disjoint feature spaces and overlapping sample identities to collaboratively train machine learning models without sharing raw local data. Despite this privacy-preserving paradigm, VFL remains vulnerable to backdoor attacks. In particular, a malicious passive party can inject carefully crafted triggers into local inputs or intermediate embeddings, causing targeted mispredictions during inference. Existing VFL backdoor attacks (e.g., BadVFL) typically assume that the malicious client has additional knowledge of task labels, which conflicts with the core privacy assumptions of VFL. In this paper, we propose GOFA, a gradient-oriented backdoor attack for VFL. GOFA leverages server-provided gradient feedback to construct a poisoned dataset and applies adversarial-example techniques (e.g., FGSM) to mask original features and strengthen trigger learning. Experiments on CIFAR-10 and UCI-HAR demonstrate the effectiveness of our method across multiple settings.
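The key mechanic in the abstract, a passive party exploiting only the gradient the server sends back, can be illustrated with a toy example. The sketch below assumes a hypothetical two-party setup: the passive party owns a linear bottom model `W_p`, and the active (label-owning) party runs a logistic top model on the summed embeddings. All names (`server_feedback`, `fgsm_step`, the toy weights) are illustrative and do not come from the paper. It shows one generic FGSM step driven by server-returned gradients, not the GOFA algorithm itself; how GOFA constructs its poisoned dataset and trigger is not reproduced here.

```python
import math
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(W: Matrix, x: Vector) -> Vector:
    """Bottom-model forward pass: h = W x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matvec_transposed(W: Matrix, g: Vector) -> Vector:
    """Backward pass through h = W x: dL/dx = W^T (dL/dh)."""
    return [sum(W[r][c] * g[r] for r in range(len(W))) for c in range(len(W[0]))]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def top_logit(h_passive: Vector, h_active: Vector, v: Vector) -> float:
    """Hypothetical active-party top model: linear score on the summed embeddings."""
    return sum(vi * (hp + ha) for vi, hp, ha in zip(v, h_passive, h_active))


def bce_loss(z: float, label: int) -> float:
    """Binary cross-entropy on a logit; only the active party knows `label`."""
    p = sigmoid(z)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))


def server_feedback(h_passive: Vector, h_active: Vector, v: Vector, label: int) -> Vector:
    """dL/dh_passive: the only signal the active party returns to the passive party."""
    dz = sigmoid(top_logit(h_passive, h_active, v)) - label  # d(BCE)/d(logit)
    return [dz * vi for vi in v]


def fgsm_step(x: Vector, W_p: Matrix, grad_h: Vector, eps: float) -> Vector:
    """One FGSM step on the passive party's raw features: x + eps * sign(dL/dx)."""
    grad_x = matvec_transposed(W_p, grad_h)
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad_x)]


# Toy values (all hypothetical): 2-d passive features, 2-d embeddings.
W_p = [[1.0, -2.0], [0.5, 1.0]]  # passive party's bottom model
x_p = [1.0, 1.0]                 # passive party's local features
h_a = [0.0, 0.0]                 # active party's embedding for the same sample
v = [1.0, 1.0]                   # active party's top-model weights
label = 1                        # held only by the active party

h_p = matvec(W_p, x_p)
grad_h = server_feedback(h_p, h_a, v, label)
x_adv = fgsm_step(x_p, W_p, grad_h, eps=0.1)

loss_before = bce_loss(top_logit(h_p, h_a, v), label)
loss_after = bce_loss(top_logit(matvec(W_p, x_adv), h_a, v), label)
print(x_adv, loss_before, loss_after)
```

Because the passive party owns `W_p`, it can backpropagate the returned gradient to its raw features without ever seeing the label. The FGSM step then moves each feature by ±`eps` in the direction that raises the active party's loss, which in this toy case weakens the evidence for the sample's true class.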
